The vocabulary you'll hit when you read about AI in the wild: terms used in product release notes, tech press, and water-cooler conversations. Skim it once; come back when something doesn't make sense.

- LLM (Large Language Model)

- The neural network behind ChatGPT, Claude, Gemini, and similar tools. Trained on huge amounts of text to predict the next word, which — at scale — turns into reading, writing, reasoning, and coding ability.

- Token

- The unit of text a model reads and writes — roughly a chunk of three or four characters or about three-quarters of a word. "AI" is one token; "antidisestablishment" is several. Pricing and context limits are usually measured in tokens.
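
The rules of thumb in this entry make a quick back-of-the-envelope estimator. Below is a minimal Python sketch that assumes English text at roughly 4 characters or 0.75 words per token. `estimate_tokens` is a hypothetical helper, and a vendor's real tokenizer will give different counts.

```python
def estimate_tokens(text: str) -> dict[str, int]:
    """Rough token counts for English text from two rules of thumb.

    Heuristic only (assumed ratios): a real tokenizer can differ noticeably.
    """
    chars = len(text)
    words = len(text.split())
    return {
        "by_chars": round(chars / 4),     # ~4 characters per token
        "by_words": round(words / 0.75),  # ~0.75 words per token
    }


print(estimate_tokens("Skim it once; come back when something doesn't make sense."))
```

For billing-grade counts, use the tokenizer or token-counting tool your provider publishes.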

- Context Window

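- The maximum amount of text the model can take into account at once: the prompt, the conversation so far, any attachments, and the response it is writing. When a conversation outgrows it, something has to give. Many chat tools silently drop the earliest messages, while others summarize them or return an error. Modern windows range from roughly 32k to over 1M tokens.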
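
Here is a minimal sketch of the "drop the earliest messages" strategy. It assumes the rough 4-characters-per-token estimate rather than a real tokenizer. `fit_to_window` and `rough_count` are hypothetical names, not any product's API.

```python
from typing import Callable


def rough_count(text: str) -> int:
    # Assumption: ~4 characters per token (heuristic, not a real tokenizer)
    return max(1, len(text) // 4)


def fit_to_window(messages: list[str], max_tokens: int,
                  count_tokens: Callable[[str], int] = rough_count) -> list[str]:
    """Drop the oldest messages until the total token count fits in max_tokens."""
    kept = list(messages)
    while kept and sum(count_tokens(m) for m in kept) > max_tokens:
        kept.pop(0)  # the earliest message is discarded first
    return kept


history = ["x" * 40, "y" * 40, "z" * 40]  # ~10 tokens each by the rough estimate
trimmed = fit_to_window(history, max_tokens=25)
print([m[0] for m in trimmed])  # → ['y', 'z']
```

Real tools typically keep the system prompt pinned, and some summarize older turns instead of discarding them.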

- Prompt

- Whatever you send to the model: a question, an instruction, a document, an image. The quality of your prompt is usually the biggest factor you control in the quality of the response.

- System Prompt

- A standing instruction that the tool sends along with every message in a conversation, ahead of your own text, to set role, tone, or rules. ChatGPT Custom Instructions, Claude Styles, and Gemini Saved Info are flavors of this.
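
To see what "applies to every message" means in practice, here is a sketch that assembles the list of messages sent to a model on each turn. It assumes the role/content message shape many chat APIs use. Some APIs, such as Anthropic's, take the system prompt as a separate top-level field instead. `build_request` is a hypothetical helper.

```python
def build_request(system_prompt: str, history: list[dict], user_message: str) -> list[dict]:
    """Assemble one turn's messages: system prompt first, then history, then the new message."""
    return (
        [{"role": "system", "content": system_prompt}]  # re-sent on every turn
        + history
        + [{"role": "user", "content": user_message}]
    )


history = [
    {"role": "user", "content": "Summarize this memo."},
    {"role": "assistant", "content": "Here is a three-line summary..."},
]
for msg in build_request("You are a concise editor. Use British spelling.", history, "Now shorten it."):
    print(msg["role"], "|", msg["content"])
```

The system prompt rides along with every request, so it also counts against the context window on every turn.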

- Hallucination

- When the model confidently states something false. Names, citations, statistics, and quotes are the most common offenders. The fix isn't trust — it's verification, web search, or grounding the answer in a document you provided.

- Grounding

- Tying the model's answer to a specific source: a document you uploaded, a search result, a database. Grounded answers can cite the source. That makes them easy to check and substantially reduces hallucinations, though it does not eliminate them.

- RAG (Retrieval-Augmented Generation)

- A pattern where the system first searches a knowledge base for relevant chunks, then hands those to the model along with your question. How most "chat with your documents" features work under the hood.
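
The retrieve-then-generate pattern fits in a few lines. This toy version ranks chunks by how many words they share with the question, which is a crude stand-in for the embedding search (see Embedding) that real systems use. The knowledge base, prompt wording, and function names are all hypothetical, and the final call to a model is left out.

```python
import re


def words(text: str) -> set[str]:
    # Lowercased word set; a crude stand-in for an embedding model
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def retrieve(question: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks that share the most words with the question."""
    q = words(question)
    return sorted(chunks, key=lambda c: len(q & words(c)), reverse=True)[:k]


def build_rag_prompt(question: str, chunks: list[str], k: int = 2) -> str:
    """Retrieve relevant chunks, then package them with the question for the model."""
    sources = retrieve(question, chunks, k)
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(sources, start=1))
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"Sources:\n{numbered}\n\nQuestion: {question}"
    )


knowledge_base = [
    "Refunds are issued within 14 days of a return.",
    "Our office is closed on public holidays.",
    "Returns must include the original receipt.",
]
print(build_rag_prompt("How many days until a refund is issued?", knowledge_base, k=1))
```

The instruction to cite sources by number is what grounds the answer: a reader can check each claim against the retrieved text.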

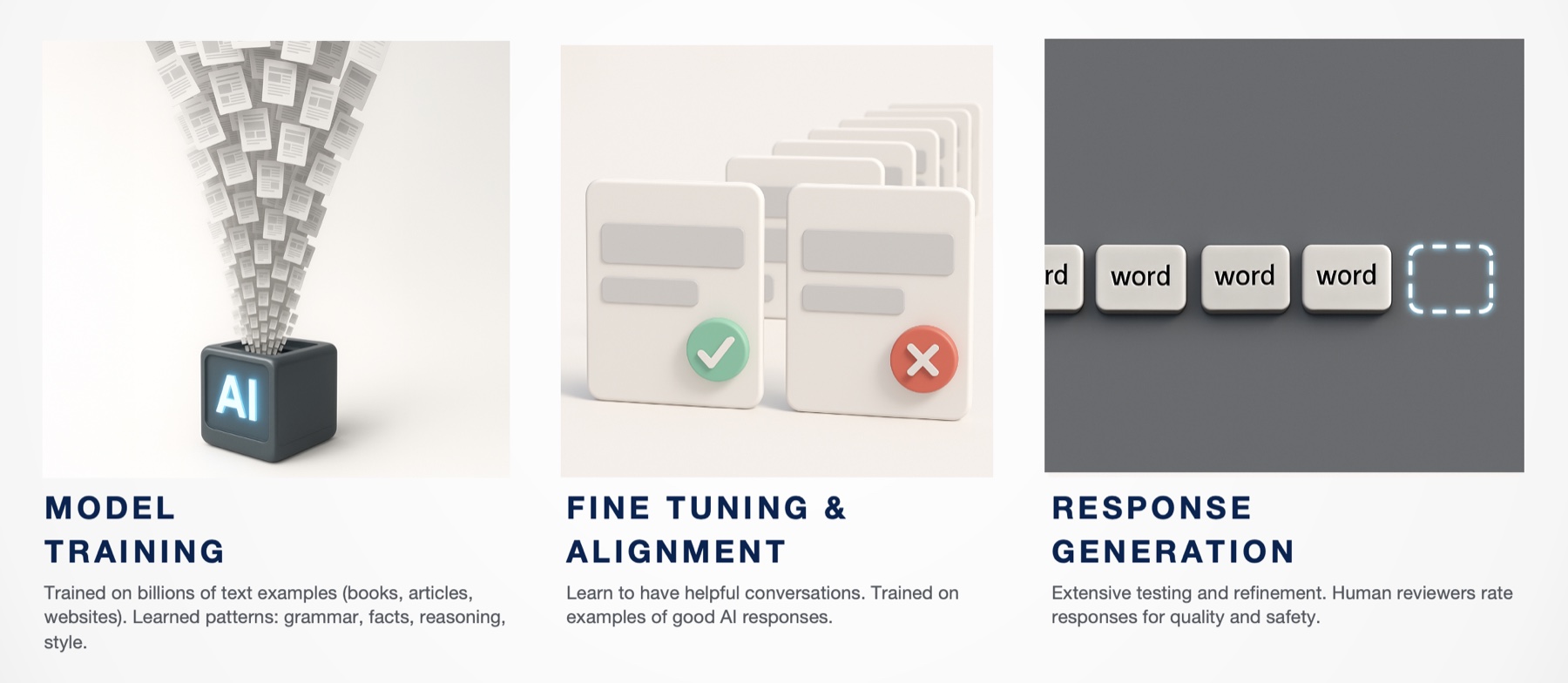

- Fine-tuning

- Further training of a model on a custom dataset to specialize it. Less common than it used to be — for most tasks, prompting and RAG do the job without the cost of fine-tuning.

- Inference

- A single run of the model — your prompt in, the model's answer out. "Inference cost" is what you pay per call; "inference latency" is how long the model takes to respond.
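
Many providers price inference per million tokens, with separate rates for input (your prompt) and output (the response). Here is a sketch of the arithmetic, using made-up prices rather than any vendor's actual rates.

```python
def inference_cost(input_tokens: int, output_tokens: int,
                   usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Dollar cost of one call, given per-million-token prices for input and output."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


# Hypothetical rates: $3 per million input tokens, $15 per million output tokens
cost = inference_cost(2_000, 500, usd_per_m_input=3.00, usd_per_m_output=15.00)
print(f"${cost:.4f}")  # → $0.0135
```

At many providers output tokens cost more than input tokens, so long responses can dominate the bill.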

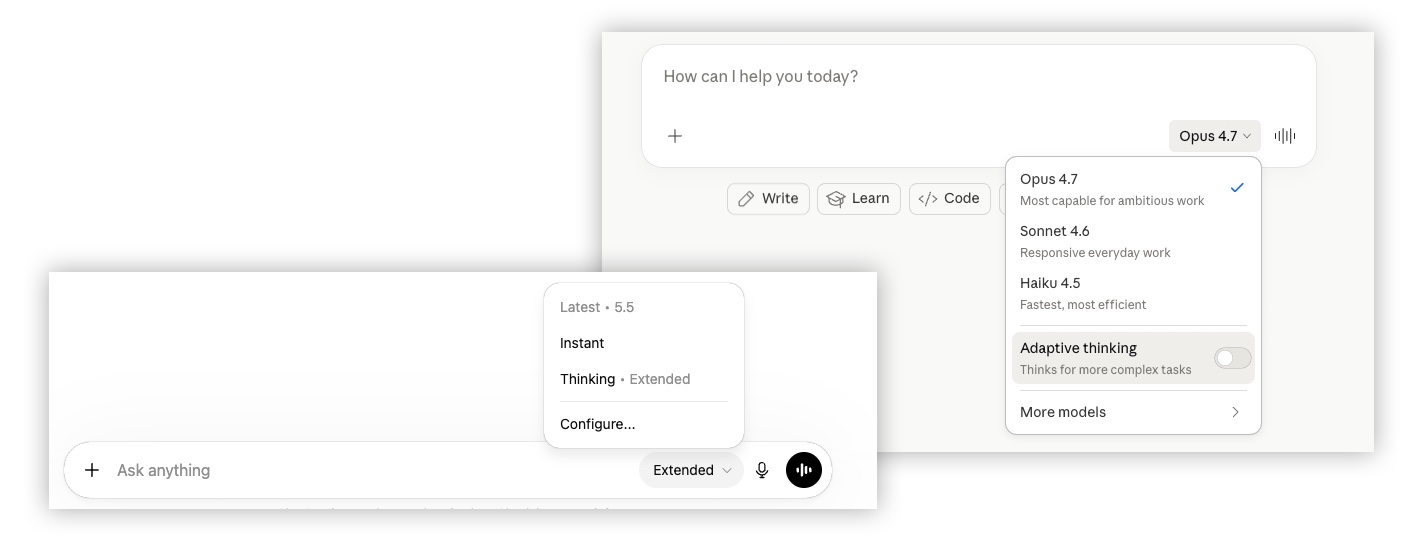

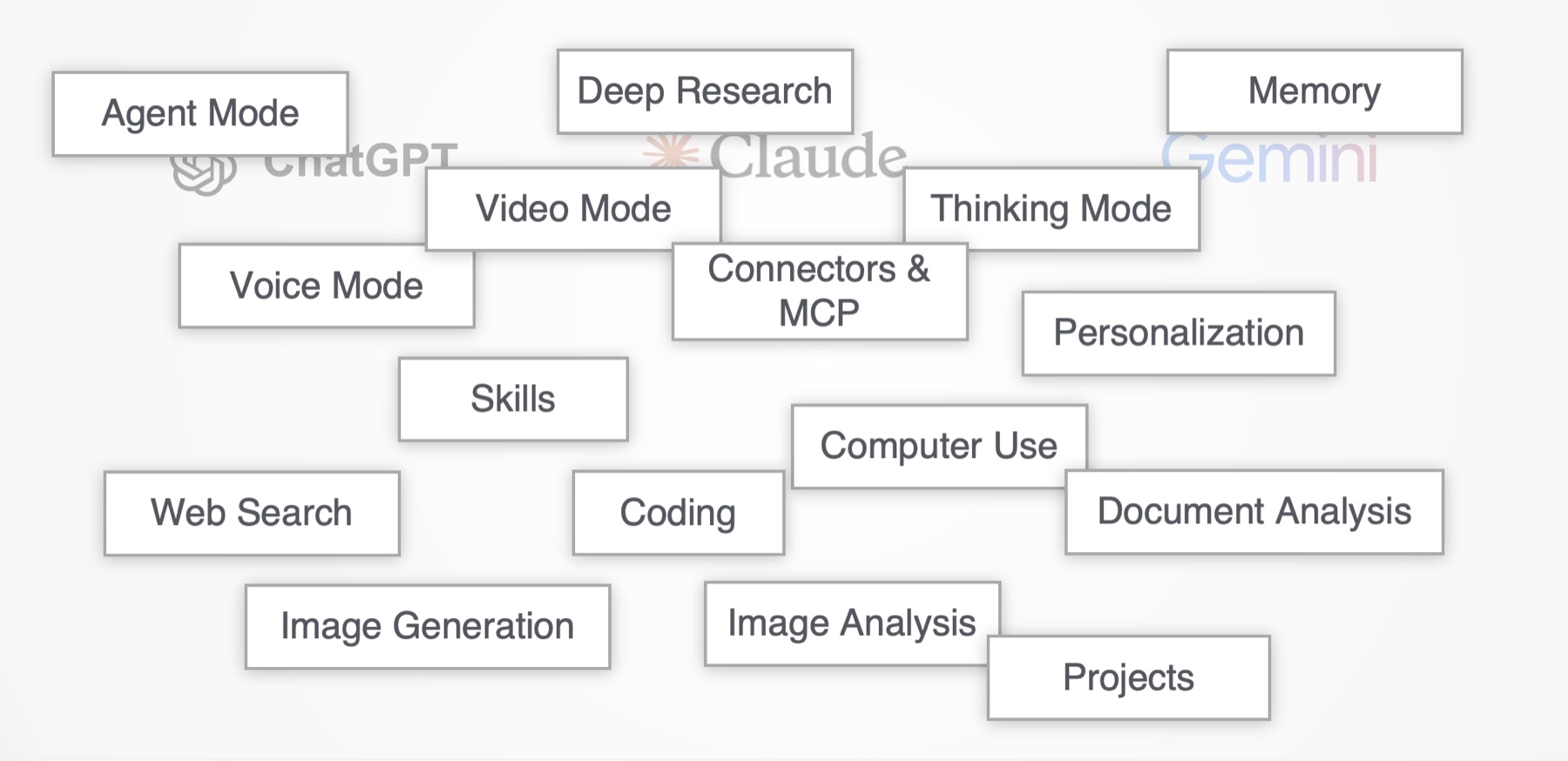

- Reasoning / Thinking Model

- A model that works through a problem step by step before answering, often showing its reasoning or a summary of it, trading time for accuracy on complex tasks. Examples include Claude Extended Thinking, the ChatGPT o-series models, Gemini Deep Think, and Grok Think.

- Agent

- A model setup that doesn't just respond — it plans, takes actions, observes results, and iterates. Think "the AI books the flight" rather than "the AI tells you which flight to book."
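
The plan, act, observe, iterate cycle is easiest to see as a loop. In this sketch, plain Python functions stand in for the model call that plans and for the real tools. `run_agent`, `toy_planner`, and `toy_act` are hypothetical names.

```python
from typing import Callable, Optional


def run_agent(goal: str,
              plan_next: Callable[[str, list[str]], Optional[str]],
              act: Callable[[str], str],
              max_steps: int = 5) -> list[str]:
    """Plan, act, observe, repeat until the planner says it is done (or max_steps is hit)."""
    observations: list[str] = []
    for _ in range(max_steps):
        action = plan_next(goal, observations)  # plan: choose the next step
        if action is None:                      # planner judges the goal met
            break
        result = act(action)                    # act: run a tool, call an API, ...
        observations.append(f"{action} -> {result}")  # observe: feed the result back
    return observations


# Toy stand-ins for a model-driven planner and real tools (hypothetical)
def toy_planner(goal: str, observations: list[str]) -> Optional[str]:
    steps = ["search flights", "pick the cheapest", "book it"]
    return steps[len(observations)] if len(observations) < len(steps) else None


def toy_act(action: str) -> str:
    return "ok"


for line in run_agent("book a flight to Lisbon", toy_planner, toy_act):
    print(line)
```

The `max_steps` cap is a simple guard against an agent that never decides it is finished.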

- Tool Use / Function Calling

- The model's ability to call external tools (a search engine, a calculator, your calendar API) instead of guessing. The mechanism that turns a chat model into an agent.
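
The model doesn't run the tool itself. It emits a structured request, the application executes it, and the result goes back to the model. Here is a sketch of the application side. It assumes the tool call arrives as JSON with `name` and `arguments` fields; each vendor's API has its own exact format. The tools are hypothetical stubs.

```python
import json


# Hypothetical tools the application offers to the model
def add(a: float, b: float) -> float:
    return a + b


def get_weather(city: str) -> str:
    return f"Sunny in {city}"  # stub; a real tool would query a weather API


TOOLS = {"add": add, "get_weather": get_weather}


def handle_tool_call(model_output: str) -> str:
    """Run the tool the model asked for and return the result as JSON for the model to read."""
    call = json.loads(model_output)
    tool = TOOLS.get(call["name"])
    if tool is None:
        return json.dumps({"error": f"unknown tool {call['name']}"})
    result = tool(**call["arguments"])
    return json.dumps({"name": call["name"], "result": result})


# Pretend the model answered "what is 2 + 3?" with a tool call instead of a guess
print(handle_tool_call('{"name": "add", "arguments": {"a": 2, "b": 3}}'))
# → {"name": "add", "result": 5}
```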

- MCP (Model Context Protocol)

- An open standard for connecting AI assistants to outside data sources and tools — Gmail, Notion, Slack, GitHub, your database. Anthropic introduced it; most major vendors now support it.
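
MCP messages are JSON-RPC 2.0. The spec defines methods such as `tools/list` (ask a server which tools it offers) and `tools/call` (invoke one). This sketch only builds the request messages. It skips the initialization handshake and the transport (stdio or HTTP), and the `search_notes` tool is hypothetical.

```python
import json
from itertools import count
from typing import Optional

_next_id = count(1)  # JSON-RPC requests carry an id so responses can be matched to them


def mcp_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize one JSON-RPC 2.0 request as an MCP client would send it."""
    message = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


print(mcp_request("tools/list"))
print(mcp_request("tools/call", {"name": "search_notes", "arguments": {"query": "Q3 roadmap"}}))
```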

- Multimodal

- A model that handles more than just text: images, audio, video. Modern flagships (GPT-5, Claude 4, Gemini 2.5) all accept images as well as text; which other formats they can take in or produce varies by model and product.

- Embedding

- A numerical representation of a chunk of text that captures its meaning. Two pieces of text with similar meaning have similar embeddings. The math behind semantic search and most RAG systems.
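
"Similar embeddings" is usually measured with cosine similarity, which captures how closely two vectors point in the same direction. This sketch uses made-up three-number vectors. Real embeddings come from an embedding model and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Closer to 1.0 means the two vectors point in more nearly the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Made-up 3-dimensional "embeddings"; real ones come from an embedding model
cat = [0.9, 0.1, 0.0]
kitten = [0.85, 0.15, 0.05]
invoice = [0.0, 0.2, 0.95]

print(round(cosine_similarity(cat, kitten), 3))   # high: similar meaning
print(round(cosine_similarity(cat, invoice), 3))  # low: unrelated meaning
```

Semantic search embeds the query the same way, then returns the stored chunks whose vectors score highest against it.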

- Temperature

- A knob that controls how predictable the model's output is. Low temperature = more consistent answers that stick to the most likely choices. Higher temperature = more variety, more creativity, more risk of weirdness.
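
Mechanically, temperature rescales the model's raw scores for each candidate next token before those scores become probabilities. This sketch uses three made-up scores. At low temperature the top choice gets most of the probability; at high temperature the probability is spread more evenly.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw next-token scores into probabilities, rescaled by temperature (> 0)."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)
    exps = [math.exp(x - top) for x in scaled]  # subtracting the max avoids overflow
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature {t}: {[round(p, 3) for p in probs]}")
```

Dividing by zero isn't possible, so APIs typically treat temperature 0 as always taking the top-scoring token. Even then, outputs are not always identical across runs.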

- Knowledge Cutoff

- The date the model's training data ends. Without web search, the model genuinely doesn't know about anything that happened after that date — and may confidently make things up if you ask.

- Prompt Injection

- A class of attacks in which hostile instructions are hidden in content the model reads (a webpage, an email, a PDF) and try to override what you actually asked. This is why agents and connectors are designed cautiously.

- Open Weights vs. Closed Weights

- Open-weights models (Llama, DeepSeek, Mistral) have their parameters published, so you can download and run them yourself. Closed-weights models (the flagship GPT, Claude, and Gemini models) are accessed only through the vendor's API or product.

- Vibe-coding

- Building software primarily by describing what you want in natural language to an AI coding tool, then accepting and iterating on what it produces rather than writing each line yourself. Cursor, Claude Code, and GitHub Copilot are common choices.

![RTCF prompting framework template — Role: You are a [specific expert with clear expertise]. Task: Your goal is to [verb-forward instruction]. Context: Here's what you need to know [detailed background]. Format: Structure your response as [specific format with examples].](/images/rtcf-framework.jpg)